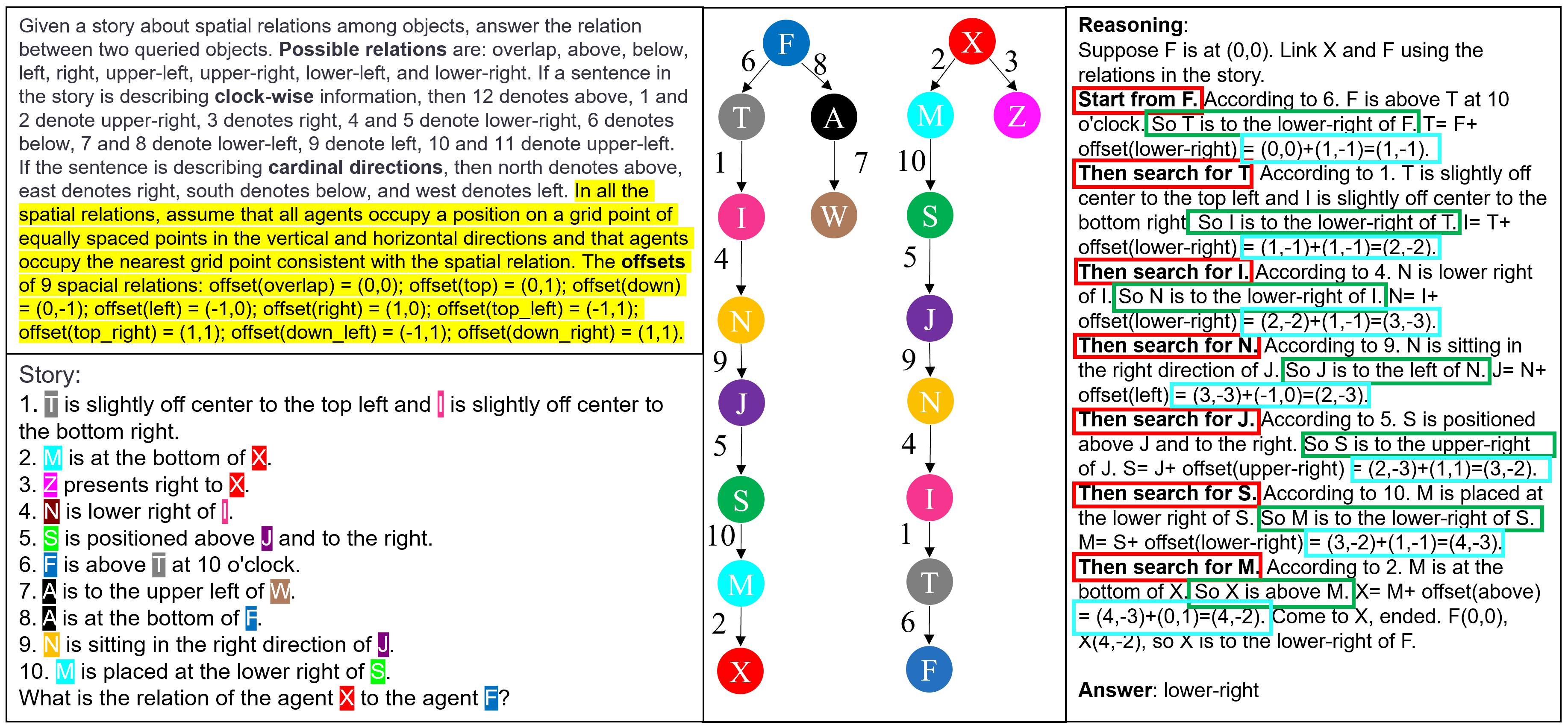

Reframing Spatial Reasoning Evaluation in Language Models

This work builds a real-world simulation benchmark for evaluating spatial reasoning abilities of language models.

An In-Depth Evaluation and Enhancement Using the StepGame Benchmark

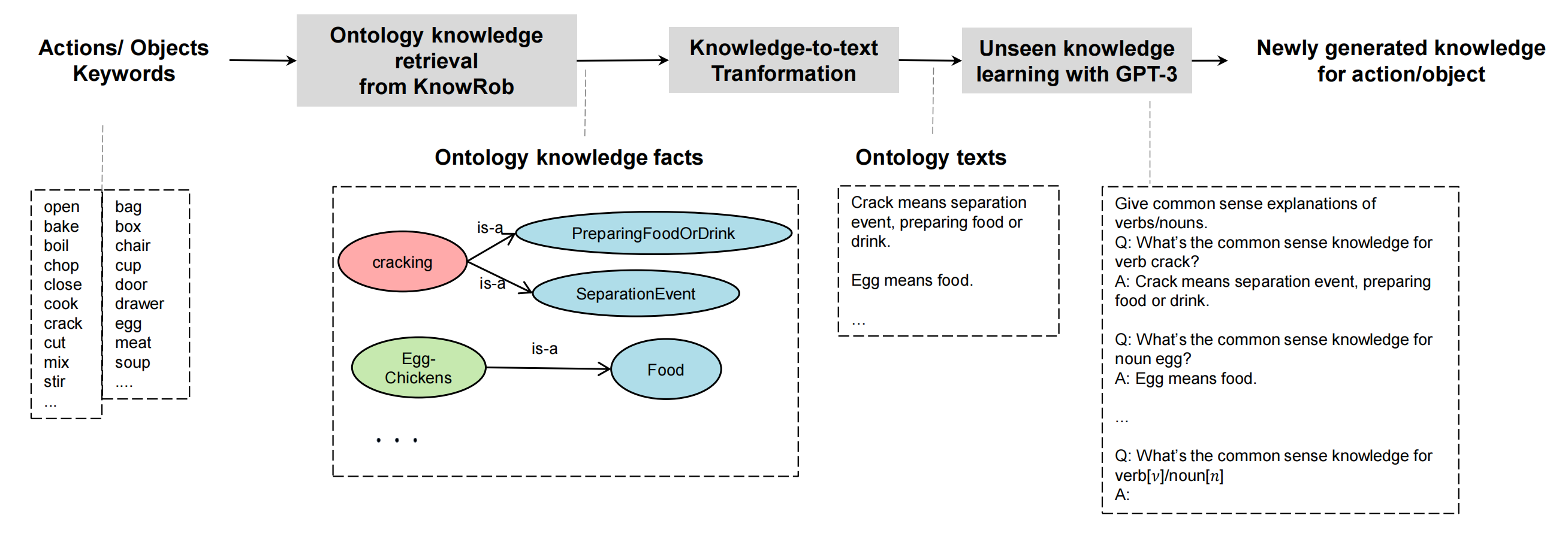

We propose task-relevant ontology knowledge integration for in-context learning with generative pre-trained language models (PLMs). Specifically, we develop an ontology-to-text transformation that bridges the gap between symbolic knowledge and text. We further introduce unseen knowledge learning, which uses PLMs to infer knowledge for concepts that have no definition in the knowledge base.
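
As a rough illustration of what an ontology-to-text transformation could look like, the sketch below turns symbolic triples into plain sentences and places the task-relevant ones in a prompt for in-context learning. It is not the authors' implementation: the relation names, the templates, the substring-based relevance filter, and the functions `triple_to_text` and `build_prompt` are all assumptions made for this example.

```python
from typing import Iterable, List, Tuple

Triple = Tuple[str, str, str]  # (subject, relation, object)

# Hypothetical relation templates; a real ontology would define its own relations.
TEMPLATES = {
    "subClassOf": "{s} is a kind of {o}.",
    "partOf": "{s} is part of {o}.",
    "hasProperty": "{s} has the property {o}.",
}


def triple_to_text(s: str, p: str, o: str) -> str:
    """Verbalize one triple, falling back to a plain 's p o.' sentence."""
    template = TEMPLATES.get(p)
    if template is None:
        return f"{s} {p} {o}."
    return template.format(s=s, o=o)


def relevant_triples(question: str, triples: Iterable[Triple]) -> List[Triple]:
    """Keep triples whose subject or object is mentioned in the question.

    Assumption: simple case-insensitive substring matching stands in for
    whatever concept-linking step a real system would use.
    """
    q = question.lower()
    return [t for t in triples if t[0].lower() in q or t[2].lower() in q]


def build_prompt(question: str, triples: Iterable[Triple]) -> str:
    """Assemble an in-context prompt with verbalized ontology knowledge."""
    facts = [triple_to_text(*t) for t in relevant_triples(question, triples)]
    knowledge = "\n".join(f"- {fact}" for fact in facts)
    return f"Knowledge:\n{knowledge}\nQuestion: {question}\nAnswer:"


if __name__ == "__main__":
    ontology = [
        ("robin", "subClassOf", "bird"),
        ("wing", "partOf", "bird"),
        ("trout", "subClassOf", "fish"),
    ]
    print(build_prompt("Can a robin fly?", ontology))
```

The resulting string would be passed to a generative PLM. Concepts with no matching triple simply contribute no knowledge lines in this sketch, which is the gap that unseen knowledge learning is described as addressing.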